As artificial intelligence (AI) continues to shape the future of industries, data centers play an increasingly pivotal role in powering these technologies. With the explosive growth of AI applications, from machine learning and natural language processing to autonomous systems and robotics, the energy demand for data centers is soaring. This article examines the latest trends, challenges, and solutions in AI data center energy, with a focus on sustainability, efficiency, and the future of computing infrastructure.

Artificial intelligence demands significant computational resources, which directly impacts data centers. The more advanced AI models become, the more processing power they require. In particular, large-scale models, such as GPT-4 and other deep learning systems, necessitate vast amounts of energy for both training and inference phases. As AI continues to grow in relevance and usage, data centers are becoming the backbone of AI operations, fueling not only cloud-based services but also edge computing solutions.

AI’s ability to revolutionize industries like healthcare, finance, and transportation also increases the pressure on data centers to handle large datasets, high-frequency requests, and computationally intensive tasks. This surge in demand has led to heightened concerns about the environmental impact of these facilities. Data centers consume about 1% of global electricity, and the need for greater energy efficiency is now more urgent than ever.

Data centers typically require a continuous supply of power to support servers, networking equipment, storage systems, and cooling mechanisms. Training a large AI model can involve thousands or even tens of thousands of GPUs, all of which run around the clock and consume massive amounts of electricity.

While AI-powered applications can drive innovation across multiple industries, they also bring about several energy consumption challenges. The training process alone for large AI models is highly resource-intensive. For example, training a state-of-the-art deep learning model for language translation or image recognition can take days or even weeks, using large clusters of GPUs or specialized hardware like TPUs (Tensor Processing Units).

Moreover, as more businesses adopt AI, more data centers are being built to meet these demands, further escalating energy consumption. According to a report by the International Energy Agency (IEA), data centers’ energy use could triple by 2030 if no improvements are made to energy efficiency.
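To put a projection like this in perspective, it helps to convert the overall multiple into a yearly growth rate. The snippet below is a minimal sketch of that compound-growth arithmetic. The function name and the six-year horizon are illustrative assumptions, not figures from the IEA report, which is not quoted here with a baseline year.

```python
def implied_annual_growth(multiple: float, years: int) -> float:
    """Return the constant yearly growth rate that yields `multiple` after `years`.

    Solves (1 + r) ** years == multiple for r.
    """
    if multiple <= 0 or years <= 0:
        raise ValueError("multiple and years must be positive")
    return multiple ** (1.0 / years) - 1.0


# Assumption: a six-year horizon is used purely for illustration.
rate = implied_annual_growth(multiple=3.0, years=6)
print(f"Implied growth: {rate:.1%} per year")
```

Under that assumed horizon, a tripling works out to roughly 20% growth per year, which shows how quickly steady year-over-year increases compound.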

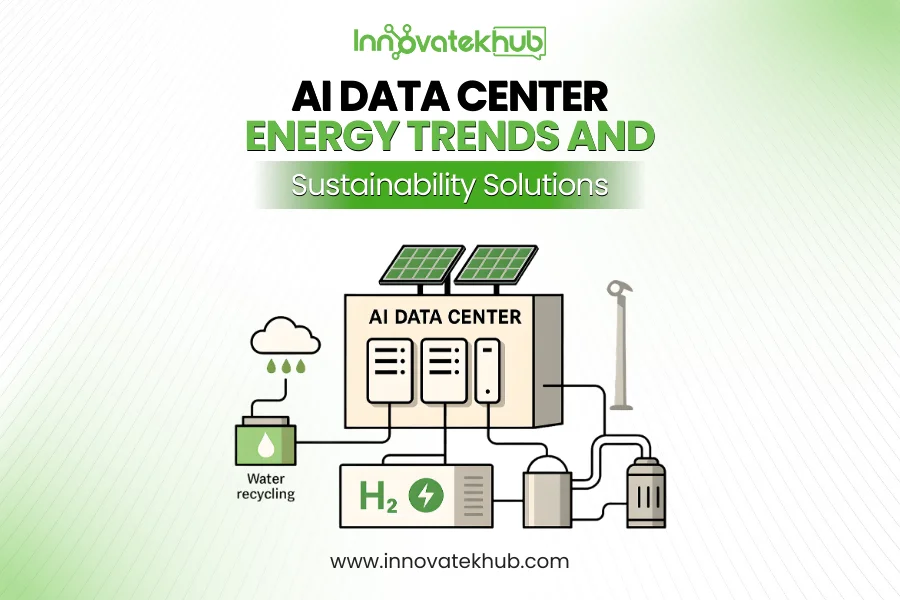

As the environmental footprint of AI data centers becomes a growing concern, companies are actively exploring ways to mitigate energy consumption while maintaining optimal performance. Several key innovations in energy management and sustainability practices are making AI data centers more eco-friendly and cost-efficient.

One of the most effective ways to reduce the carbon footprint of AI data centers is through the use of renewable energy sources. Companies like Google, Amazon Web Services (AWS), and Microsoft are already leading the way by committing to power their data centers with 100% renewable energy. This shift is part of a broader movement toward sustainability in the tech industry, driven by both environmental responsibility and the economic advantages of renewable energy.

The integration of solar, wind, and hydroelectric power into data center operations is not only environmentally beneficial but also cost-effective in the long run. As renewable energy technology advances, the cost of harnessing these resources continues to drop, making them more accessible for companies looking to reduce their reliance on fossil fuels.

Interestingly, AI itself can be a solution to the energy consumption challenge. Machine learning algorithms can be used to optimize the operation of data centers, making them more energy-efficient. For example, AI-powered systems can dynamically manage the power usage of servers, cooling systems, and other components based on real-time demand. This helps avoid energy waste during off-peak times and trims energy use during periods of high load.
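The idea of matching power usage to real-time demand can be sketched in a few lines. The example below is a toy illustration, not how any particular operator does it: it stands in a simple moving average for the machine learning forecast, and the class name, the per-server capacity, and the 20% headroom are all hypothetical values chosen for the example.

```python
import math
from collections import deque


class DemandAwareScaler:
    """Toy power manager: keep just enough servers active for the forecast load."""

    def __init__(self, capacity_per_server: float, total_servers: int,
                 window: int = 3, headroom: float = 0.2):
        self.capacity_per_server = capacity_per_server  # requests/s one server handles
        self.total_servers = total_servers              # size of the fleet
        self.headroom = headroom                        # safety margin above the forecast
        self.recent = deque(maxlen=window)              # most recent load samples

    def observe(self, requests_per_second: float) -> None:
        """Record the latest measured load."""
        self.recent.append(requests_per_second)

    def forecast(self) -> float:
        """Moving average of recent load (a stand-in for a learned model)."""
        return sum(self.recent) / len(self.recent) if self.recent else 0.0

    def servers_needed(self) -> int:
        """Servers to keep powered on; the rest can idle or sleep."""
        target = self.forecast() * (1 + self.headroom)
        needed = math.ceil(target / self.capacity_per_server)
        return max(1, min(self.total_servers, needed))


scaler = DemandAwareScaler(capacity_per_server=100, total_servers=50)
for load in (200, 300, 400):
    scaler.observe(load)
print(scaler.servers_needed())
```

A production system would replace the moving average with a trained forecasting model and would also account for the time and energy it takes to wake servers, but the control loop has the same shape: observe, forecast, then provision only what the forecast requires.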

Companies are leveraging AI to optimize the cooling process as well. Cooling systems are a major contributor to the energy demand of data centers, often requiring additional power to maintain optimal operating temperatures for hardware. By using AI algorithms to predict temperature changes and adjust cooling settings accordingly, data centers can significantly reduce the energy spent on cooling, which can account for up to 40% of the total energy consumption in some facilities.
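A quick calculation shows why cooling is such an attractive target. The sketch below takes the 40% cooling share mentioned above and an assumed 25% reduction in cooling energy (a hypothetical figure used only for illustration) and computes the resulting facility-wide saving.

```python
def total_energy_saved(cooling_share: float, cooling_reduction: float) -> float:
    """Fraction of total facility energy saved when cooling energy is cut.

    cooling_share:     cooling energy as a fraction of total facility energy
    cooling_reduction: fractional cut in cooling energy (hypothetical input)
    """
    for name, value in (("cooling_share", cooling_share),
                        ("cooling_reduction", cooling_reduction)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")
    return cooling_share * cooling_reduction


saving = total_energy_saved(cooling_share=0.40, cooling_reduction=0.25)
print(f"Facility-wide saving: {saving:.0%}")  # prints "Facility-wide saving: 10%"
```

In a facility where cooling is a smaller share of the total, the same improvement in the cooling system yields a proportionally smaller overall saving.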

As AI processing power continues to grow, traditional air-based cooling methods are proving insufficient. Liquid cooling systems are emerging as an innovative solution to this challenge. These systems circulate a liquid coolant past a server’s components to dissipate heat more effectively than air cooling.

Liquid cooling not only improves the efficiency of data centers but also reduces their overall energy consumption. By efficiently transferring heat from hardware components, liquid cooling minimizes the need for excessive airflow and, in turn, reduces the demand for cooling systems. This is particularly important in AI-driven data centers, where high-performance GPUs and specialized chips can generate immense amounts of heat during operation.

Another area where AI data centers are innovating to reduce energy consumption is in the hardware itself. AI workloads require specialized hardware, such as GPUs and TPUs, to perform complex computations. These processors are designed to handle parallel tasks, which can increase energy efficiency compared to traditional CPUs.

However, the evolution of energy-efficient hardware goes beyond GPUs and TPUs. In recent years, new technologies have emerged that promise to make hardware more energy-efficient, such as neuromorphic computing, quantum computing, and optical computing. These technologies aim to mimic the efficiency of the human brain or leverage quantum mechanics to perform computations more efficiently, offering promising avenues for reducing the energy consumption of AI data centers in the future.

Edge computing is another key trend that is helping to address energy concerns in AI-powered environments. Edge computing involves processing data closer to the source of the data generation, rather than transmitting all data to centralized cloud data centers for processing. By offloading tasks to edge devices, the overall strain on data centers is reduced, resulting in less energy consumption and lower latency.

Edge computing is especially beneficial in scenarios where real-time processing is crucial, such as autonomous vehicles, IoT devices, and industrial applications. This decentralized approach not only reduces energy consumption but also improves the overall efficiency of AI systems by allowing for faster processing times.

Despite the progress being made in AI data center energy optimization, there are still significant challenges that need to be addressed to ensure a sustainable future. One of the primary concerns is the continued growth in demand for AI computing power. As AI becomes more prevalent in various industries, the demand for data centers and computational resources will continue to increase, further straining energy systems.

Another challenge is the reliance on hardware that is not yet optimized for energy efficiency. While significant strides have been made in developing energy-efficient processors, much of the hardware currently used in data centers is still built on older architectures, which are not as energy-efficient as newer models. Transitioning to more sustainable hardware solutions will require significant investment and research.

Finally, there are geopolitical challenges related to the development and distribution of renewable energy. While some regions are abundant in renewable resources, others may not have access to them, creating imbalances in energy availability and driving up costs for some data centers.

As the demand for AI continues to rise, governments and regulatory bodies around the world are recognizing the need to address the environmental impact of data centers. Many countries are implementing regulations to promote energy efficiency and reduce carbon emissions in the tech sector.

The European Union, for example, has introduced regulations requiring companies to disclose the energy consumption and environmental impact of their data centers. Additionally, governments are offering incentives and subsidies for businesses that adopt renewable energy and energy-efficient technologies.

While regulations are still evolving, the role of policy in shaping the future of AI data center energy usage is expected to grow. By encouraging companies to adopt more sustainable practices, policymakers can help ensure that the growth of AI does not come at the expense of the planet.

The future of AI data center energy will be shaped by continued advancements in both hardware and software. As AI becomes more integrated into daily life and industries, the energy demands of data centers will only increase. However, with the right combination of renewable energy, innovative cooling technologies, AI-powered optimization, and sustainable hardware, data centers can meet these challenges and play a key role in creating a more sustainable future.

The continued growth of edge computing, along with the emergence of new hardware technologies like neuromorphic and quantum computing, will also play a crucial role in reducing energy consumption. By decentralizing data processing and improving the efficiency of AI hardware, the industry can move closer to a future where AI and sustainability go hand in hand.

As AI continues to transform industries and societies, the energy consumption of AI data centers will remain a critical issue. However, with technological advancements and a growing commitment to sustainability, the future of AI data centers can be both efficient and eco-friendly. By embracing renewable energy, optimizing operations with AI, adopting liquid cooling, and investing in energy-efficient hardware, data centers can reduce their environmental impact and help drive the AI revolution toward a greener future.

The journey toward sustainable AI data centers is ongoing, but the steps taken today will pave the way for a more energy-efficient and environmentally conscious future in computing.
